Participants in the workshop should follow these instructions to post a 100-200 word reading reflection before Thursday, March 23, 2017.

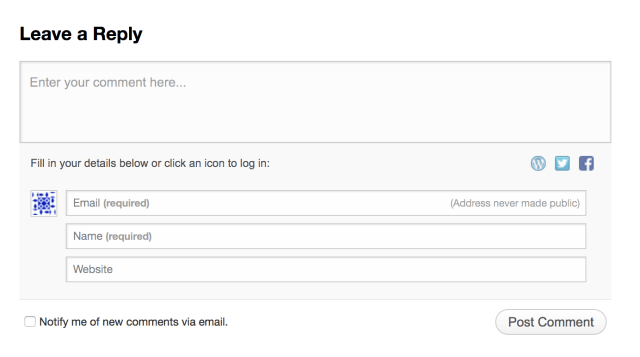

Click on “Leave a Comment” under this post as illustrated below:

Type your reply into the comment box that appears. WordPress will ask for your name (which will be displayed) and your email address (which will not be displayed).

Participants are encouraged, though not required, to comment in reply to other responses, and begin the dialogue that will take place on Friday and Saturday.

Gailey Article:

My main interest in this article lies in the focus on editorial decision-making, and the importance of transparency to users. The examples given, especially the visual examples from children’s literature, illustrate ways in which TEI can expand our searchable knowledge base, but also illustrate the importance of a human editor making decisions and selecting a tag set — they underline the very hand-written nature of TEI, which is something we will be talking about in great detail this weekend.

Follow-up question, if anyone wants to think about it: What parallels are there between Gailey’s work and decision-making process and that of editing a medieval manuscript?


The most striking aspect of the Gailey article for me was the discussion of regularizing the language of texts without corrupting the original character or meaning of the document. That they have decided to normalize unusual words is significant when considering that spelling and vocabulary can be very fluid in older documents. The use of the tag would seem to render many documents infinitely more searchable. In addition, the decision on how to mark up documents that only implicitly refer to something (such as Lincoln references in Whitman poems) is an interesting problem, as there would need to be a consensus that the reference actually exists. How does one resolve whether it is appropriate to tag something with an ambiguous reference or defer for the moment, thus potentially limiting the exposure of a document to an interested party?


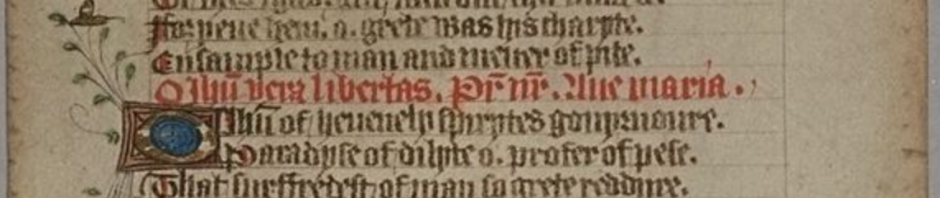

I was also interested by the discussion of regularizing language and what that would mean for medieval texts in particular. Does all Latin become regularized into classical Latin? So that, in addition to tidying up orthographical inconsistencies within the text, would we change, for example, the internal ‘c’s into ‘t’s or the ‘e’s into ‘ae’s? And, perhaps more importantly, how does the user know which spelling conventions to follow when searching?

The article does a good job of pointing out the political implications of such standardization, and I found the solution of providing the regularized form alongside the original form (and not calling one ‘corrected’) sufficient. I’m curious, however, about the practicalities of this when we’re not dealing with regularizing into a modern standard language that has established style and usage guides. I expect medievalists working in DH have established a consensus, and I expect this will be duly covered in the workshop, but I thought I’d raise the issue nonetheless.


I’ve found Gailey’s description of digital editing very inspiring.

In particular, I was struck by the paradoxical polarization of reading practices that she describes in the first section. As she explains, the taggers and the transcribers of a digital edition often encounter a richer, more productive task than those who work on a regular edition; they are prompted to ask unconventional questions, and to consider texts and artifacts in new ways. On the other hand, the users of digital editions are in most cases superficial “searchers,” discouraged from engaging with the text in innovative ways.

But is “heavy editing” (non-standard and interpretive editing) a true answer to this imbalance? Doesn’t it instead further disempower end-users, by providing them with ready-made searchable patterns (tags that have already been predicted by editors) and second-hand discoveries? The second solution that she proposes sounds more convincing. Instead of making editing “heavier,” we should keep thinking about ways (tools, interfaces) that can turn digital reading and searching – for the end-user – into an active process of intellectual exploration.


It seemed the article was expressing a sense of dissatisfaction at the disparity between the amount of work required to put together a collection of digitized texts and the rather limited use that they are put to. It raises the question of whether the architecture of tagging could be improved to enable more “creative” searches, closer in nature to “close reading”. It proposes the tagging of “nonliteral” content, which can be problematic, since multiple readings are not accepted in the protocol. I don’t think that digital searches lack the “serendipitous” nature of more traditional searches. The problem lies with the lack of “digital literacy” of the users and seems easier to solve by developing guidelines and tutorials in order to explore the full potential of online resources for more traditionally minded forms of research.

As for the standardization of graphemes, the OED allows for searches according to multiple forms of the same word, so maybe what is needed is a combination of tagging and that multivalent search.


Like Gianmarco, I was also interested in Gailey’s discussion of interpretive editing. Should a digital edition simply make a text as accessible as possible, or should it also guide the reader through a reading of the text? The second option, such as marking “Captain” as “Abraham Lincoln,” might be immediately useful for students, but it might also limit their own interpretations. Suddenly, if “Captain” is marked authoritatively as “Abraham Lincoln,” then other interesting readings of “Captain” might be stifled if students feel there is nothing else to say about the word “Captain.” At the same time, these kinds of interpretive glosses seem similar to glosses in medieval manuscripts. And though medieval glosses sometimes provide rather confident interpretations, this doesn’t stop us from finding new interpretations separate from, alongside, or in contrast to them. In fact, the glosses themselves often become another medieval text worth analyzing and considering. Perhaps the answer is simply creating different digital editions of manuscripts, just as we create different print editions. Certain editions could be more open to exploration (light on interpretive tagging) while others could be more geared towards instruction (heavy on interpretive tagging).


I’m also excited about the level of engagement with a text that mark-up decisions take. It seems like a fantastic way to approach familiar texts with fresh eyes, and so much research work could actually get done in the process of building a corpus of texts! Depending on the audience envisioned for a digital medieval manuscript project, I wonder if more editing, and consequently more gate-keeping, might be required. The barriers (languages, paleography, codicology) to doing meaningful research with manuscripts are extremely high. Beyond issues of language or spelling, the challenges of how to handle allusion, allegory, quotation, or reference must be omnipresent, rather than occasional hurdles. How much more of a medieval manuscript, compared with a 19th-century children’s book, has to be glossed to make it meaningful to a 21st-century reader?


I have two reactions. First, I’m not too concerned with under-tagging, as in the example of the Whitman “Captain” poem and whether to indicate that “Captain” refers to Lincoln. I would think that a thorough searcher looking for literary references to Lincoln should be searching terms that commonly stand in for a president, which would include words like captain, chief, commander, etc. Second, I think that we have to always be aware that the acts of selection of texts to edit, and tagging either form or content, are acts that alter both their interpretation and their prominence relative to other sources.


I have a couple of questions/thoughts after reading this article:

First, in terms of markup/tagging, what is the difference between the regular expression syntax available in Microsoft Word’s own wildcard search and the XML+TEI combination?

Second, while Gailey emphasizes the popularity of TEI in American digital humanities, is this the most commonly used tag set system in existing medieval studies databases? Or, how can we know what is the most commonly used tagging/coding apparatus in our own specific area of interest?

Third, the “optional normalization of text” and the “” tag that Gailey talks about in the article seem to me a great way to provide alternative transcriptions of certain words in manuscripts and make it possible for database users to locate them. For instance, “novit” and “nouit” can nest in one unit, and users can find the word using either “novit” or “nouit”. However, it then leads to the problem that we need to come up with further functions to turn off this option in users’ research, so that users who are looking for specific scribal spellings of words will not be distracted.

Fourth, the problem of “error-tolerant rate in searching” is probably beyond the topic of this article. However, I am curious to know how existing databases, especially in the area of medieval studies, deal with this problem.

Fifth, as far as I am concerned, “heavy editing/commentary” and “user-annotation” functions can be very helpful for digital projects concerning the transcription of manuscripts (especially medieval canon law manuscripts). This combination has the potential to create a space for scholarly conversations, which will be mutually beneficial.
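
To make the third point above more concrete, here is a rough sketch of how the “novit”/“nouit” pairing and an on/off switch for normalization might look. It assumes the pairing is encoded with the TEI `choice`, `orig`, and `reg` elements; the Latin sample sentence, the `find_word` helper, and its `use_regularized` switch are all made up for illustration and are not taken from Gailey’s article or from any existing database.

```python
import xml.etree.ElementTree as ET

TEI_NS = "http://www.tei-c.org/ns/1.0"
NS = {"tei": TEI_NS}

# Hypothetical sample: the scribal spelling and the regularized
# spelling sit side by side inside one <choice> unit.
SAMPLE = (
    '<p xmlns="http://www.tei-c.org/ns/1.0">Deus '
    "<choice><orig>nouit</orig><reg>novit</reg></choice>"
    " omnia</p>"
)


def find_word(root, query, use_regularized=True):
    """Return (orig, reg) pairs whose spelling matches the query.

    With use_regularized=False only the scribal spelling in <orig>
    is compared, which is the "turn it off" option described above.
    """
    hits = []
    for choice in root.iter(f"{{{TEI_NS}}}choice"):
        orig = choice.findtext("tei:orig", default="", namespaces=NS)
        reg = choice.findtext("tei:reg", default="", namespaces=NS)
        forms = {orig}
        if use_regularized:
            forms.add(reg)
        if query in forms:
            hits.append((orig, reg))
    return hits


root = ET.fromstring(SAMPLE)
print(find_word(root, "novit"))                         # normalization on
print(find_word(root, "novit", use_regularized=False))  # normalization off
```

With normalization on, either spelling locates the word; with it off, only the spelling actually written in the manuscript matches. A real project would presumably expose this as a search option in the interface rather than as a function argument.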


To follow up, my first question concerning the relationship between regular expressions and XML is touched upon in the article “What is XML and why should humanists care”. However, the author does not address the difference clearly. In my third question I was talking about the “choice” tag. I added pointy brackets around the word “choice” as it is in the article, and the whole unit disappeared in my post. I guess it was because WordPress was treating it as an HTML tag? By the way, I just noticed that the time zone of this website seems to be a couple of hours ahead of EST. I did submit this post before Mar 23 🙂
